The Situation

In January 2024, we started a conversation with a regional retail group whose e-commerce platform processed roughly 40,000 orders per day during normal periods, scaling to 200,000+ during major sale events. Their backend ran on Oracle Database 19c, hosted on-premise in two co-location data centers. The database held approximately 50TB of transactional data: orders, inventory, customer records, fulfillment history, and seven years of audit logs that compliance required them to retain.

The problem was not that the current setup was failing. It was that the current setup was becoming untenable. Their Oracle licensing costs had increased 34% over the previous three years, driven partly by Oracle's aggressive audit practices and partly by genuine growth in their data volumes. The hardware refresh cycle on their co-location servers was overdue, and the capital expenditure required to refresh on-premise while also investing in their product development roadmap was not feasible. Cloud migration was the right strategic decision.

The hard constraint that made this project genuinely difficult: they could not afford downtime during their peak season window, which runs from September through January. We had a project start date of February 2024 and a hard requirement to complete production cutover before the following September. That gave us approximately seven months to migrate 50TB of an active production database without a maintenance window.

Why Aurora, and Why the Schema Question Was Not Simple

The target platform decision was made before we engaged: the client's CTO had evaluated Aurora PostgreSQL against Aurora MySQL and RDS Oracle (which would have preserved the Oracle dialect) and landed on Aurora PostgreSQL for a combination of cost, performance, and long-term maintainability reasons. We agreed with the conclusion but the implications were significant: migrating from Oracle to PostgreSQL is not a lift-and-shift. It is a database port that requires attention to Oracle-specific syntax, data types, and procedural logic that does not translate directly.

The client's Oracle database contained 847 stored procedures, 130 database triggers, and 23 packages - a substantial amount of business logic that had accumulated over 11 years of development. The first phase of the project was a complete audit of this procedural layer to identify Oracle-specific constructs that would require rewriting. AWS Schema Conversion Tool (SCT) gave us a head start: it automated conversion of roughly 60% of the stored procedures with no manual intervention required. The remaining 40% required varying degrees of manual rewrite, from minor syntax adjustments to complete logic restructuring for cases where the Oracle and PostgreSQL execution models differ fundamentally.

The most common conversion challenges were: Oracle's DECODE function (replaced with CASE expressions), Oracle sequences versus PostgreSQL sequences (different syntax, compatible semantics), Oracle's implicit TO_DATE conversion behavior (PostgreSQL is stricter about date format strings), and several stored procedures that used Oracle's CONNECT BY hierarchical query syntax (replaced with PostgreSQL's recursive CTEs). We used Orafce, a PostgreSQL extension that implements Oracle compatibility functions, to reduce the rewrite surface area - it does not eliminate the work, but it meaningfully reduces it for common patterns.

The Migration Strategy: Dual-Write and Shadow Reads

A 50TB database migration without a maintenance window requires a strategy that maintains two consistent databases simultaneously while traffic continues flowing to the source system. We used a dual-write approach with shadow reads for validation, built on top of AWS Database Migration Service (DMS) for the continuous replication layer.

The architecture worked as follows. DMS was configured in ongoing replication mode to replicate changes from Oracle to Aurora PostgreSQL in near real-time - typically with 2-5 second lag under normal load, expanding to 15-20 seconds during peak traffic periods. During the migration period, the application wrote exclusively to Oracle and read exclusively from Oracle. Simultaneously, a shadow service consumed a copy of all write operations and replayed them against Aurora PostgreSQL, allowing us to validate that the two databases converged to the same state.

The shadow read component was the more technically interesting piece. We built a validation service that periodically sampled records from both databases - 50,000 records per hour, selected by hash to ensure even distribution across the data set - and compared field-by-field. Discrepancies were logged with full context: the record identifier, the field name, the Oracle value, the Aurora value, and the timestamp of the last modification in each system. This gave us a continuous picture of data integrity during the migration period and allowed us to catch schema conversion issues that had not appeared in the development environment.

We found eleven categories of discrepancy during the shadow read period. Most were minor: numeric precision differences where Oracle's default numeric type has different precision behavior than PostgreSQL's, timestamp timezone handling differences, and NULL versus empty string handling in legacy data. Two were more significant: a date arithmetic stored procedure that behaved differently across the two systems in edge cases involving daylight saving time boundaries, and a trigger that had an Oracle-specific behavior for handling duplicate key violations that we had not fully replicated in the PostgreSQL version. Both were caught and corrected during the parallel operation period, before any risk of data corruption in production.

The Phased Approach: Four Stages to Cutover

Rather than treating this as a single event, we structured the migration as four distinct phases with defined go/no-go criteria at each stage.

Phase 1 - Schema and static data (weeks 1-4): Migrate the database schema, reference data tables, and historical archive data that is not actively modified. This established the Aurora environment and allowed us to validate the schema conversion against real data volumes without any risk to production. We used the initial DMS full load for this phase, which took 11 days to complete for the 50TB dataset. DMS had no difficulty with the volume, but we ran into IAM permission issues and VPC routing configuration issues that consumed roughly two days of debugging - predictable for anyone who has worked with DMS before, but worth planning for.

Phase 2 - Ongoing replication and shadow validation (weeks 5-14): Enable DMS ongoing replication and the shadow read validation service. This phase ran for ten weeks - longer than originally planned, because we used the discrepancy data to drive iterative improvements to the schema conversion. The go/no-go criterion for Phase 3 was zero discrepancies in a 72-hour continuous monitoring window. We hit that criterion at week 14.

Phase 3 - Application layer preparation (weeks 15-22): Migrate the application connection layer to support dual database connections, implement feature flags that would allow us to shift read traffic to Aurora before shifting write traffic, and complete load testing of the Aurora environment under simulated peak traffic conditions. Aurora is not a drop-in Oracle replacement from a performance perspective - the query optimization paths are different, and several queries that performed well on Oracle required index additions or query restructuring for Aurora. This work was done in Phase 3, before any production traffic touched Aurora, using production data clones for load testing.

Phase 4 - Cutover (week 24, executed over a single weekend): The actual cutover was executed in stages. First, all read traffic was shifted to Aurora (using the feature flags built in Phase 3) while writes continued to Oracle. We monitored for 4 hours under live read traffic before proceeding. Then, at 2am Sunday when order volume was at its daily minimum, we stopped the application, allowed DMS to drain the final replication lag to zero, promoted Aurora to primary, updated the application configuration to write to Aurora, and restarted the application. Total downtime: 8 minutes. Oracle remained available for 14 days post-cutover as a rollback option, with one-way replication from Aurora back to Oracle to keep it current during the rollback window.

Performance Tuning on Aurora

The performance story had two chapters. The first, immediately after cutover, was concerning: several high-traffic queries were running 40-60% slower on Aurora than they had on Oracle. The second chapter, after two weeks of tuning, was better than we had initially projected.

The slowest query was an order search that the application ran frequently with a combination of filter parameters that created a poor execution plan on Aurora's query optimizer. The Oracle execution plan for this query used an index combination that Aurora's planner did not choose. Adding a composite index and rewriting the query to use CTEs rather than nested subqueries brought the Aurora execution time below the Oracle baseline by 12%. A similar pattern appeared in 8 other queries - each required analysis of the execution plan, an index addition or query rewrite, and validation under load.

Aurora's connection pooling behavior also required adjustment. The Oracle environment used connection pooling with very long-lived connections. Aurora, running on PostgreSQL, is more sensitive to connection count and benefits from shorter connection lifetimes and aggressive pool recycling. After tuning RDS Proxy settings and adjusting the application connection pool configuration, connection-related performance issues were eliminated.

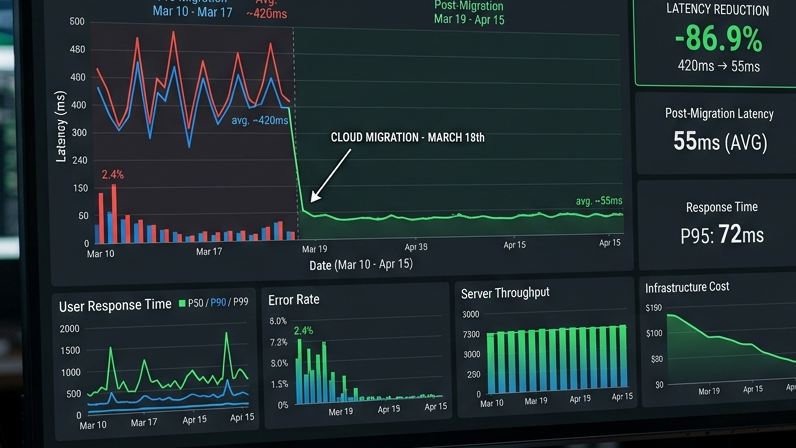

The end-state performance metrics, measured 60 days post-cutover: average query response time improved 18% versus the Oracle baseline. p99 query response time (the slowest 1% of queries) improved 31%, primarily because Aurora's I/O architecture handles high-variance workloads more consistently than the spinning disk storage the Oracle environment was using. Read replicas in two additional availability zones added redundancy that the Oracle setup did not have.

The Rollback Plan That We Did Not Need (But Were Glad We Had)

Any migration of this scope that does not have a credible rollback plan is not ready for production. The go-ahead for Phase 4 cutover was contingent on the rollback plan being tested, not just documented.

The rollback plan had three components. First, Oracle remained available and current for 14 days post-cutover, maintained via one-way replication from Aurora. If we needed to roll back, the process was: stop application writes to Aurora, allow one-way replication to catch up, flip the application connection configuration back to Oracle, and restart. Estimated rollback time: 15 minutes plus replication lag drain time. We tested this in the staging environment twice before the production cutover.

Second, we maintained a database snapshot taken immediately before the Phase 4 cutover. This was the "last resort" option if the replication-based rollback failed for any reason. Restoring 50TB from snapshot takes significantly longer - we estimated 4-6 hours - but the option existed.

Third, the application feature flags built in Phase 3 remained in place post-cutover. If specific application functions showed issues in production that were isolated to certain query paths, we could shift those specific paths back to Oracle reads without a full rollback. This was the most surgical option and was designed to handle "partial success" scenarios where most of the cutover was working but one area needed more time.

The rollback plan was never invoked. This is not a statement about the quality of the rollback plan - it is a statement about what the 14-week shadow validation period accomplished. By the time we cut over, we had high confidence in data consistency because we had been measuring it continuously.

Results and Lessons Learned

Six months post-cutover, the numbers are: Oracle licensing costs eliminated (approximately $1.2M annually), co-location hardware costs eliminated (approximately $380K annually), Aurora running costs at current scale approximately $420K annually. Net annual savings: approximately $1.16M. The migration project cost (including GTEMAS engagement, AWS migration credits, and internal time) was approximately $680K. Payback period: approximately 7 months.

Beyond the cost story, the infrastructure agility improvement is meaningful. The client can now scale Aurora read capacity up and down in response to traffic patterns without hardware procurement lead times. They ran their 2024 peak season on Aurora without incident, processing 215,000 orders on their highest-volume day - a new record - with p99 query latency that was lower than their Oracle baseline had delivered during normal operation periods.

The lessons are predictable to anyone who has done migrations of this scale, but they bear repeating because projects continue to underinvest in them. Shadow validation is not optional overhead - it is the mechanism that identifies conversion errors before they become production incidents. The Oracle procedural layer required significantly more manual rewrite effort than the SCT estimates suggested. Performance tuning on the target platform requires production-scale load testing data, not estimates from development environments. And the rollback plan needs to be tested, not just documented.

If your organization is evaluating a database migration of significant scale - particularly an Oracle to PostgreSQL or Aurora migration - the planning, validation, and tuning work is where the project is won or lost. GTEMAS has executed migrations of this complexity and can help you scope the work realistically from the start.