Something Changed, and Most Companies Missed It

Here is a question worth sitting with: when was the last time your engineering team spent an entire sprint writing code from scratch?

Not configuring something. Not integrating an API someone else built. Not debugging a dependency conflict. Actually writing new business logic, line by line, from a blank file.

For most enterprise teams, the honest answer is "rarely." And that was true before AI tools entered the picture. The daily reality of a modern software engineer was already dominated by reading existing code, navigating complex systems, attending meetings to align on requirements, and wrestling with deployment pipelines. The actual "writing code" part was maybe 30% of the job.

Now AI has compressed that 30% dramatically. Tools like GitHub Copilot, Cursor, and Claude can generate working implementations in seconds. A function that took 45 minutes to write, test, and refine? Done in 3 minutes with a well-crafted prompt. A database migration script? Generated before you finish your coffee.

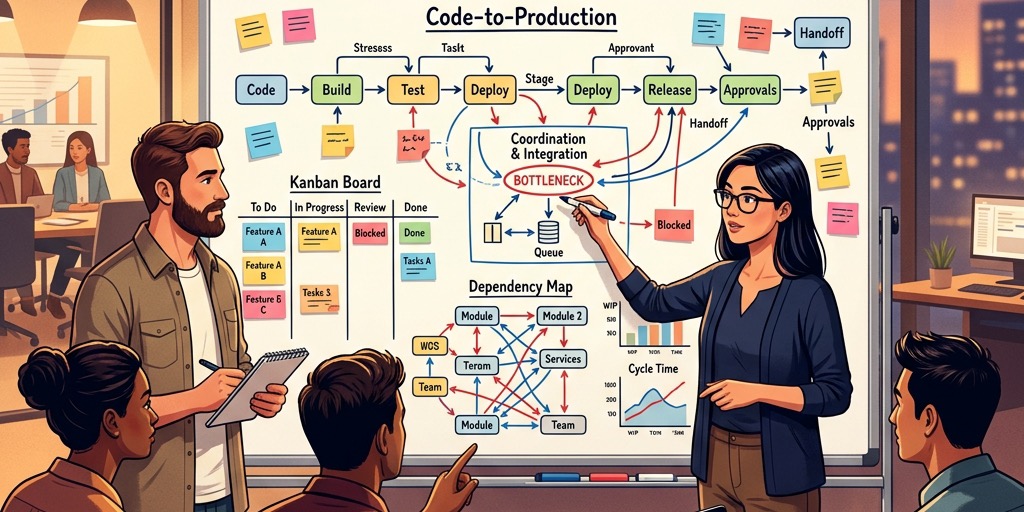

This sounds like great news. And it is. But it also creates a problem that most organizations haven't grappled with yet: if writing code is no longer the bottleneck, then what is? And if your entire delivery model (your estimation frameworks, your team structures, your vendor contracts) was built around the assumption that writing code is slow and expensive, what happens when that assumption breaks?

The Real Bottleneck Was Never the Code

Let's talk about what actually slows down enterprise software projects. If you've been in the industry long enough, you already know the answer. It's not typing speed. It's not even technical complexity, most of the time.

It's alignment.

Getting stakeholders to agree on what they actually want. Translating a business requirement like "we need better customer retention" into a specific set of features, data flows, and user experiences. Managing the gap between what the product owner described in a meeting and what the developer understood three days later when they finally started working on the ticket.

A study by the Standish Group found that 66% of software project failures can be traced back to poor requirements, not poor engineering. The code was fine. The team built the wrong thing, or they built the right thing in the wrong order, or they didn't discover a critical constraint until month four of a six-month project.

This is where AI's impact gets interesting. It's not just making developers faster typists. It's collapsing the feedback loop between "I think I understand what you want" and "here's a working prototype you can actually click through." When that loop shrinks from weeks to hours, everything downstream changes.

What This Looks Like in Practice

Let me give you a concrete example from a project we ran last year.

A financial services client needed a compliance reporting module: the kind of feature that, in the old world, would have started with three weeks of requirements gathering, produced a 40-page specification document that nobody would fully read, and then taken another six weeks to build, with at least two rounds of "that's not what we meant" revisions along the way.

Instead, our team did something different. In a single working session with the client's compliance officer, we recorded the conversation, fed the transcript into an AI system, and generated a structured set of user stories with acceptance criteria within an hour. But here's the part that mattered: we also generated a clickable prototype the same day. Not a polished product, but a rough, functional mockup that the compliance officer could interact with.

She clicked through it and immediately spotted three assumptions we'd gotten wrong. One of them: "This field needs to show the historical audit trail, not just the current status." That single correction, caught on day one, would have meant two weeks of rework if discovered during QA testing. We adjusted the prototype in real time. By the end of the week, we had a validated design that everyone agreed on.

The total build time for the final module? Three weeks, including testing and deployment. The old timeline estimate for the same scope? Ten to twelve weeks.

Was the code written faster? Yes, somewhat. But the real time savings came from eliminating the ambiguity loop: the back-and-forth cycle of misunderstanding that eats up most enterprise project timelines.

The Uncomfortable Truth About Estimation

If you manage engineering budgets, this section is for you.

Most enterprise software contracts are still priced on effort: hours worked, story points burned, sprints completed. The implicit assumption is that more effort equals more value. You're buying time, and time is expensive because skilled developers are expensive.

AI breaks this model in an awkward way. If a senior developer can now accomplish in two hours what used to take twelve, should the client pay for two hours or twelve? If the vendor quotes twelve and delivers in two, are they pocketing ten hours of margin? If they quote two, are they undervaluing the architectural thinking that made those two hours productive?

We're seeing this tension play out across the industry. Some vendors are quietly using AI tools internally while still billing at the old rates. Others are racing to the bottom on price, cutting quotes by 50% and hoping volume makes up the difference. Neither approach is sustainable.

The honest answer is that the unit of value needs to change. Instead of buying hours, enterprise clients should be buying outcomes: a working compliance module, a migrated data pipeline, a redesigned checkout flow. The vendor's job is to deliver that outcome reliably and quickly. How they do it, whether a human writes every line by hand or an AI generates 80% of the boilerplate, shouldn't matter to the buyer. What matters is quality, reliability, and speed.

At GTEMAS, we've been moving toward outcome-based engagement models for exactly this reason. We scope work by deliverables and milestones, not by headcount and hours. It's a harder model to sell (clients are used to counting bodies), but it aligns incentives properly. We're rewarded for being efficient, not for being slow.
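To make the incentive difference concrete, here is a minimal sketch of the two pricing models applied to the same deliverable. The two-hour and twelve-hour figures come from the example above; the hourly rate and the fixed outcome price are hypothetical numbers chosen only for illustration, not actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One deliverable, priced two ways. Rate and price are hypothetical."""
    hourly_rate: float    # assumed billing rate per hour
    hours_by_hand: float  # effort without AI assistance
    hours_with_ai: float  # effort with AI assistance
    outcome_price: float  # assumed fixed price for the finished deliverable


def effort_revenue(rate: float, hours: float) -> float:
    """Revenue when the client is billed for hours worked."""
    return rate * hours


def revenue_per_hour(revenue: float, hours: float) -> float:
    """What the vendor actually earns per hour spent."""
    return revenue / hours


s = Scenario(hourly_rate=100.0, hours_by_hand=12.0,
             hours_with_ai=2.0, outcome_price=900.0)

# Effort-based: billing the faster work honestly shrinks revenue.
effort_slow = effort_revenue(s.hourly_rate, s.hours_by_hand)
effort_fast = effort_revenue(s.hourly_rate, s.hours_with_ai)

# Outcome-based: the price is fixed, so efficiency raises the vendor's
# earnings per hour while the client pays less than the old hourly total.
outcome_slow = revenue_per_hour(s.outcome_price, s.hours_by_hand)
outcome_fast = revenue_per_hour(s.outcome_price, s.hours_with_ai)

print(effort_slow, effort_fast)    # 1200.0 200.0
print(outcome_slow, outcome_fast)  # 75.0 450.0
```

Under these assumed numbers, hourly billing punishes the vendor for getting faster, while the fixed price rewards it and still costs the client less than twelve billed hours would have.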

What Changes for Engineering Teams

Here is something that doesn't get discussed enough: AI tools don't reduce the need for senior engineers. They increase it.

This sounds counterintuitive, so let me explain. When AI can generate code quickly, the volume of code produced per day goes up dramatically. Someone has to review that code. Someone has to decide whether the AI's architectural choices make sense in the context of the larger system. Someone has to catch the subtle bugs that AI introduces: the kind where the code compiles and passes basic tests but has a race condition that only surfaces under production load.
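For readers who haven't been bitten by one, here is a small illustration of that kind of bug. The `Account` class and `withdraw` function are hypothetical, and the concurrent case is simulated by interleaving the steps by hand so the result is repeatable; real race conditions are timing-dependent and much harder to reproduce.

```python
class Account:
    """Hypothetical account with separate read and write steps."""

    def __init__(self, balance: int) -> None:
        self.balance = balance

    def read(self) -> int:
        return self.balance

    def write(self, value: int) -> None:
        self.balance = value


def withdraw(account: Account, amount: int) -> None:
    """Read-then-write withdrawal: correct when calls never overlap."""
    current = account.read()
    account.write(current - amount)


# Sequential calls, the way a basic unit test would exercise the code.
acct = Account(100)
withdraw(acct, 30)
withdraw(acct, 30)
sequential_balance = acct.balance

# The same two withdrawals with their steps interleaved, as two
# overlapping requests could execute them under load.
acct = Account(100)
seen_by_a = acct.read()     # request A reads 100
seen_by_b = acct.read()     # request B also reads 100
acct.write(seen_by_a - 30)  # A writes 70
acct.write(seen_by_b - 30)  # B overwrites with 70; one withdrawal is lost
interleaved_balance = acct.balance

print(sequential_balance)   # 40
print(interleaved_balance)  # 70
```

The function passes the sequential test and still loses money when requests overlap. Spotting that the read and the write need to happen as one atomic step is exactly the kind of review judgment described above.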

Junior developers can prompt AI effectively for isolated tasks. But evaluating whether the output is correct in a broader system context? That requires deep experience. You need to know what "good" looks like to recognize when the AI is giving you something that's merely "functional."

We've restructured our teams around this reality. Instead of the traditional pyramid (a few seniors overseeing many juniors), we now run flatter teams where every engineer is expected to operate at an architectural level. The AI handles what junior developers used to do: writing boilerplate, generating test scaffolds, handling routine migrations. The humans focus on system design, security review, performance optimization, and the judgment calls that AI simply cannot make reliably.

This has real implications for hiring. We look for different things now. Five years ago, a coding interview might test whether a candidate could implement a binary search tree from memory. Today, we're more interested in whether they can look at a generated codebase and identify what's missing: the error handling that the AI skipped, the edge case it didn't consider, the security vulnerability hiding in a dependency it chose.

"The most dangerous code is code that works perfectly in testing and fails silently in production. AI is very good at writing code that passes tests. Knowing when to distrust that is a human skill."

The Governance Problem Nobody Wants to Talk About

There's a darker side to all of this that the industry is mostly ignoring.

When AI generates code, who's responsible for it? If an AI-generated function introduces a security vulnerability that leads to a data breach, does the liability sit with the developer who accepted the suggestion, the team lead who approved the pull request, or the vendor who deployed the tool?

Most enterprise legal frameworks haven't caught up to this question. In regulated industries (healthcare, finance, government), this isn't an abstract concern. Auditors want to know who wrote the code and whether it was reviewed by a qualified human. "An AI wrote it and a developer clicked approve" isn't going to satisfy a SOC 2 auditor.

We take this seriously because our clients operate in exactly these environments. Every piece of AI-generated code that enters our delivery pipeline goes through the same review process as human-written code: peer review, automated security scanning, and explicit sign-off by a senior engineer. We maintain full traceability: you can see exactly which parts of the codebase were AI-assisted and who reviewed them.
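As a rough sketch of what such a traceability check could look like in code (this is not our actual pipeline; the `Change` record, its field names, and the file paths are all hypothetical), each change carries its review metadata, and an audit pass flags anything missing one of the three controls, regardless of whether a human or an AI wrote it:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Change:
    """Hypothetical review record for one changed file."""
    path: str
    ai_assisted: bool
    peer_reviewers: tuple = ()
    security_scan_passed: bool = False
    senior_signoff: Optional[str] = None


def missing_controls(change: Change) -> list:
    """List the review controls this change has not yet satisfied."""
    missing = []
    if not change.peer_reviewers:
        missing.append("peer review")
    if not change.security_scan_passed:
        missing.append("security scan")
    if change.senior_signoff is None:
        missing.append("senior sign-off")
    return missing


def audit(changes: list) -> dict:
    """Map each non-compliant path to its missing controls."""
    gaps = {}
    for change in changes:
        missing = missing_controls(change)
        if missing:
            gaps[change.path] = missing
    return gaps


def ai_assisted_trail(changes: list) -> dict:
    """Map each AI-assisted path to the senior engineer who signed off."""
    return {c.path: c.senior_signoff for c in changes if c.ai_assisted}


changes = [
    Change("reports/export.py", ai_assisted=True, peer_reviewers=("dana",),
           security_scan_passed=True, senior_signoff="lee"),
    Change("reports/audit_trail.py", ai_assisted=True,
           peer_reviewers=("dana",), security_scan_passed=True),
    Change("reports/schema.py", ai_assisted=False,
           security_scan_passed=True, senior_signoff="lee"),
]

gaps = audit(changes)
trail = ai_assisted_trail(changes)
print(gaps)
print(trail)
```

In this sample data, one AI-assisted file is blocked for lacking a senior sign-off and one human-written file is blocked for lacking peer review, which is the point: the same gate applies to both.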

This isn't bureaucracy for its own sake. It's the foundation of trust. If you can't audit your AI-assisted code with the same rigor you apply to human-written code, you shouldn't be using AI in production.

Where This Is All Heading

We're still early. The AI tools available today, as impressive as they are, represent the first generation. They'll get better. The models will become more context-aware, better at understanding large codebases, more reliable in their architectural suggestions. The gap between "AI-assisted" and "AI-First" development will narrow.

But the fundamentals won't change. Software delivery has always been, at its core, a communication problem. The hard part is understanding what humans want and translating that into something a machine can execute. AI is making the execution cheaper, but the understanding remains expensive, and human.

What will change is the shape of successful delivery partnerships. The vendors who thrive will be the ones who've genuinely integrated AI into their workflows, not as a marketing gimmick but as a structural advantage that lets them deliver more value per dollar. They'll price on outcomes, staff with senior-heavy teams, and maintain the governance frameworks that enterprise clients require.

The vendors who lose will be the ones still selling warm bodies and counting hours, hoping their clients don't notice that a team of five with AI tools can outperform a team of fifteen without them.

At GTEMAS, we've made our bet. We're building our delivery organization around the assumption that AI is a permanent part of the engineering landscape, not a trend that might fade. Our teams, our processes, our commercial models: they're all designed for a world where human judgment and machine capability work together. Not because it sounds good in a pitch deck, but because it's the only model that actually works when you sit down and run the numbers.

The companies that figure this out first will have a meaningful advantage. Not just in speed, although they'll be faster. In quality, in predictability, and in the ability to adapt when requirements inevitably change halfway through a project. Because they will. They always do. The question is whether your delivery model is built to handle that gracefully, or whether it breaks every time someone says, "Actually, we need it to work a little differently."

That's the real promise of AI in enterprise delivery. Not faster typing. Better thinking.