The Paradox of Modern Engineering

For years, the software industry accepted a hard truth: "Good, Fast, Cheap - pick two." Every project manager knew the iron triangle. Every client was told to choose. The constraint felt like physics.

Generative AI has broken this triangle - not by magic, but by making specific types of work dramatically cheaper. Writing boilerplate code, generating test cases, drafting API documentation, scaffolding infrastructure configurations: these tasks used to consume a significant portion of every engineering sprint. They no longer need to. The result is that teams can deliver higher quality software, faster, and more cost-effectively - simultaneously - if they have redesigned how their delivery process actually works.

That last condition matters. Buying a GitHub Copilot license and hoping developers will figure it out is not a strategy. It produces marginal gains. The organizations seeing 30-50% reductions in delivery effort have done something more deliberate: they have re-engineered every phase of their software lifecycle around AI-augmented workflows. That re-engineered lifecycle is the GTEMAS Velocity Engine, and the rest of this article walks through how it works, phase by phase.

Phase 1: Requirements and Discovery (-30% Effort)

The traditional approach: Two to four weeks of workshops. Whiteboard photos that nobody can read a month later. A PRD (Product Requirements Document) that the development team, the product team, and the business stakeholders each interpret differently. Scope creep emerges not because stakeholders change their minds, but because the requirements were never precise enough to reveal the gaps until someone built the wrong thing.

The GTEMAS approach: We instrument the discovery process itself. Stakeholder interviews are transcribed in real time. Custom LLM agents parse these transcripts and automatically generate structured user stories as Jira issues, complete with acceptance criteria in Gherkin syntax. The same agents produce process flow diagrams as Mermaid.js code and run an edge-case analysis: essentially asking the questions a thorough QA engineer would ask at the end of the project, but before a line of code is written.
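The agents' output can be pictured with a minimal Python sketch of the last, deterministic step only. It assumes an LLM has already turned the transcript into structured data; the `UserStory` and `Scenario` fields are a hypothetical schema (not a Jira or GTEMAS format), and the sketch simply renders them as Gherkin acceptance criteria and a Mermaid flowchart.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    name: str
    given: list[str]
    when: list[str]
    then: list[str]


@dataclass
class UserStory:
    # Hypothetical schema for what an extraction agent might return.
    title: str
    role: str
    want: str
    benefit: str
    scenarios: list[Scenario] = field(default_factory=list)
    flow: list[str] = field(default_factory=list)  # ordered process steps


def to_gherkin(story: UserStory) -> str:
    """Render the story as a Gherkin feature with one block per scenario."""
    lines = [
        f"Feature: {story.title}",
        f"  As a {story.role}",
        f"  I want {story.want}",
        f"  So that {story.benefit}",
    ]
    for sc in story.scenarios:
        lines += ["", f"  Scenario: {sc.name}"]
        for keyword, steps in (("Given", sc.given), ("When", sc.when), ("Then", sc.then)):
            for i, step in enumerate(steps):
                # Gherkin convention: repeated steps of the same kind use "And".
                lines.append(f"    {keyword if i == 0 else 'And'} {step}")
    return "\n".join(lines)


def to_mermaid(story: UserStory) -> str:
    """Render the ordered process steps as a top-down Mermaid flowchart."""
    lines = ["flowchart TD"]
    lines += [f'    S{i}["{step}"]' for i, step in enumerate(story.flow)]
    lines += [f"    S{i} --> S{i + 1}" for i in range(len(story.flow) - 1)]
    return "\n".join(lines)


story = UserStory(
    title="Password reset",
    role="registered customer",
    want="to reset my password by email",
    benefit="I can regain access without contacting support",
    scenarios=[
        Scenario(
            name="Known email address",
            given=["a registered account"],
            when=["I request a reset link"],
            then=["a reset email is sent", "the old password still works until the reset completes"],
        )
    ],
    flow=["Request reset", "Send email", "Set new password"],
)
print(to_gherkin(story))
print(to_mermaid(story))
```

Keeping the model's output structured and rendering the text deterministically makes each story easy to validate before it is pushed to a tracker.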

The output of a discovery session is no longer a meeting summary. It is a validated, structured set of requirements that developers can act on the same week. Ambiguity that would have surfaced as a rework cycle in week eight surfaces instead in week one, when the cost of fixing it is an hour of conversation rather than a sprint of re-engineering.

Across our client projects, this phase alone reduces requirements-related rework by roughly 30%, and that estimate is conservative for projects with complex business logic or multiple stakeholder groups.

Phase 2: Architecture and Design (-25% Effort)

The traditional approach: Senior architects spend days drawing diagrams and debating patterns. The output is often over-engineered relative to the real requirements, because architects design for hypothetical scale rather than for the load the system will actually carry.

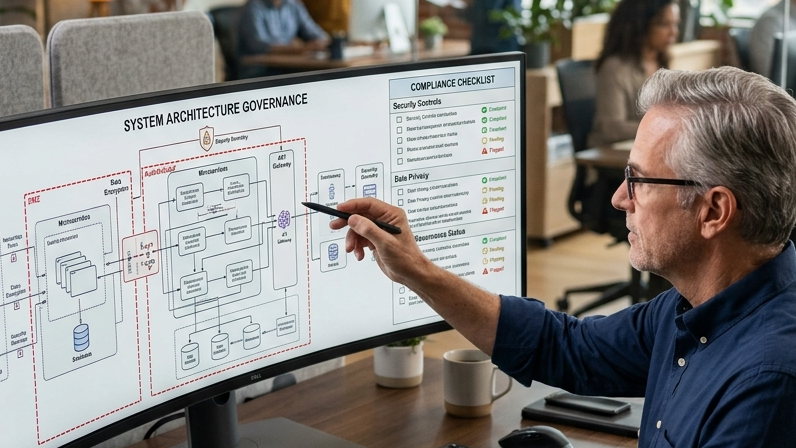

The GTEMAS approach: Our architects use AI to simulate system loads and model architectural trade-offs before committing to patterns. We feed validated requirements into our internal Architecture Oracle - a custom toolchain that suggests optimal database schemas, API definitions in Swagger/OpenAPI format, and infrastructure-as-code scaffolding in Terraform. The human architect shifts from "drafter" to "reviewer and decision-maker," focusing on the trade-offs that actually require judgment: security boundaries, data sovereignty, integration complexity, and long-term maintainability.
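As an illustration of the standard, predictable part of API design, the sketch below builds an OpenAPI 3.0 document for conventional CRUD endpoints from a resource name and a field map. It is a hypothetical helper written for this article, not the Architecture Oracle; the `orders` resource and its fields are placeholders.

```python
import json


def json_response(description: str, schema: dict) -> dict:
    return {"description": description, "content": {"application/json": {"schema": schema}}}


def crud_paths(resource: str, schema_name: str) -> dict:
    """OpenAPI 3.0 Paths Object for list/create/read/replace/delete endpoints."""
    ref = {"$ref": f"#/components/schemas/{schema_name}"}
    body = {"required": True, "content": {"application/json": {"schema": ref}}}
    not_found = {"description": "Not found"}
    return {
        f"/{resource}": {
            "get": {"summary": f"List {resource}",
                    "responses": {"200": json_response("OK", {"type": "array", "items": ref})}},
            "post": {"summary": f"Create a {schema_name}", "requestBody": body,
                     "responses": {"201": json_response("Created", ref)}},
        },
        f"/{resource}/{{id}}": {
            # Path-level parameter shared by every operation on this path.
            "parameters": [{"name": "id", "in": "path", "required": True, "schema": {"type": "string"}}],
            "get": {"summary": f"Get a {schema_name}",
                    "responses": {"200": json_response("OK", ref), "404": not_found}},
            "put": {"summary": f"Replace a {schema_name}", "requestBody": body,
                    "responses": {"200": json_response("OK", ref), "404": not_found}},
            "delete": {"summary": f"Delete a {schema_name}",
                       "responses": {"204": {"description": "Deleted"}, "404": not_found}},
        },
    }


def object_schema(fields: dict[str, str]) -> dict:
    """Turn a {field: JSON type} map from the requirements into a JSON Schema object."""
    return {
        "type": "object",
        "properties": {name: {"type": t} for name, t in fields.items()},
        "required": sorted(fields),
    }


spec = {
    "openapi": "3.0.3",
    "info": {"title": "Orders API (draft)", "version": "0.1.0"},
    "paths": crud_paths("orders", "Order"),
    "components": {"schemas": {"Order": object_schema({"id": "string", "customerId": "string", "total": "number"})}},
}
print(json.dumps(spec, indent=2))
```

A generated draft like this is exactly what the human architect then reviews: the endpoints are boilerplate, while decisions such as who may call `delete` remain judgment calls.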

The boilerplate of architecture - the part that is standard and predictable - is handled by AI. The judgment calls - the part that defines whether the system will hold up under real conditions - remain with experienced engineers. This is not a reduction in architectural rigor. It is a reallocation of architect time toward the decisions that matter.

Phase 3: Coding and Implementation (-50% Effort)

The traditional approach: Developers spend roughly 60% of their time on non-creative work: looking up syntax, writing boilerplate, debugging dependency conflicts, translating requirements into scaffolding code that any competent engineer would write the same way. The remaining 40% - the actual problem-solving, the architectural decisions, the edge case handling - is where the real value sits.

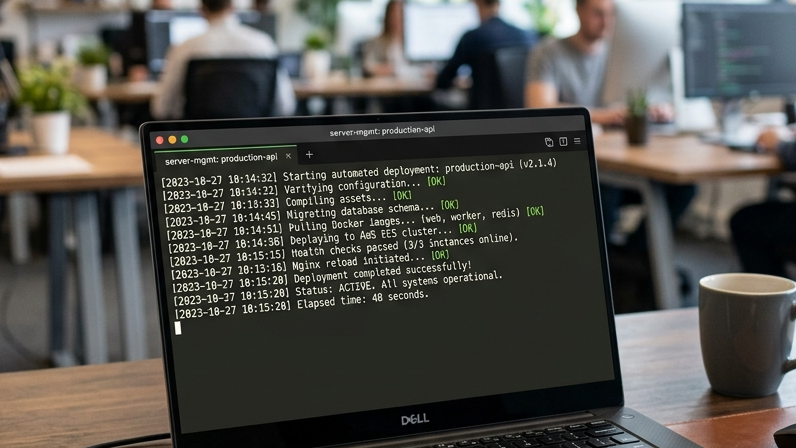

The GTEMAS approach: We implement agentic coding workflows using tools like Cursor and our own customized IDE extensions, configured with retrieval-augmented generation (RAG) access to the project's full codebase. This context-awareness is critical. A generic AI coding tool working without that context readily hallucinates functions that don't exist. Our tooling knows the codebase, understands the established patterns, and generates output that is consistent with the system it is extending.
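The retrieval step behind this context-awareness can be sketched in a few lines of Python. Production RAG setups typically rank code chunks with embedding models; here plain bag-of-words cosine similarity stands in for embeddings so the example runs without dependencies, and the file names and functions in `chunks` are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Split identifiers such as getUserById or get_user_by_id into lowercase words.
    return [w.lower() for w in re.findall(r"[A-Za-z][a-z]*|[0-9]+", text)]


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: dict[str, str], k: int = 2) -> list[str]:
    """Return the names of the k chunks most similar to the query."""
    q = Counter(tokenize(query))
    return sorted(chunks, key=lambda name: cosine(q, Counter(tokenize(chunks[name]))), reverse=True)[:k]


def build_prompt(query: str, chunks: dict[str, str], k: int = 2) -> str:
    """Prepend the retrieved code so the model extends real functions instead of inventing them."""
    context = "\n\n".join(f"# {name}\n{chunks[name]}" for name in retrieve(query, chunks, k))
    return f"Reuse the existing code below where possible.\n\n{context}\n\n# Task\n{query}"


chunks = {
    "users/repo.py": "def get_user_by_id(user_id): return db.fetch_one('users', user_id)",
    "orders/repo.py": "def list_orders_for_user(user_id): return db.fetch_all('orders', user_id=user_id)",
    "billing/tax.py": "def compute_vat(amount, rate): return amount * rate",
}
query = "add an endpoint that returns the orders for a user"
print(retrieve(query, chunks, k=2))
print(build_prompt(query, chunks, k=1))
```

Because the prompt carries `list_orders_for_user`, a model is steered toward calling that existing function rather than inventing a new query helper.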

- Boilerplate is near-free: CRUD APIs, authentication flows, UI components, database migration scripts - generated in seconds, reviewed by an engineer in minutes.

- Self-correcting builds: When a build fails, the AI analyzes the stack trace and suggests a corrective action before the developer has finished reading the error message. Debugging cycles compress from hours to minutes for the majority of common failure modes.

- Consistent code style: AI-generated code follows the project's established patterns, which reduces the cognitive load of code review and keeps the codebase coherent as it grows.
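One plausible first step in a self-correcting build is extracting the innermost frame of the failure, so the model sees the exact failing statement rather than the whole log. The Python sketch below parses a standard CPython traceback; `BuildFailure` and the wording of `repair_prompt` are hypothetical, and sending the prompt to a model is left out.

```python
import re
from dataclasses import dataclass

FRAME_RE = re.compile(r'^\s*File "(?P<file>[^"]+)", line (?P<line>\d+), in (?P<func>\S+)')
EXC_RE = re.compile(r"^(?P<type>[A-Za-z_][\w.]*)(?::\s?(?P<message>.*))?$")


@dataclass
class BuildFailure:
    exc_type: str
    message: str
    file: str
    line: int
    function: str
    source_line: str


def parse_traceback(log: str) -> BuildFailure:
    """Extract the innermost frame and the final exception from a Python traceback."""
    lines = [ln for ln in log.splitlines() if ln.strip()]
    frame_indices = [i for i, ln in enumerate(lines) if FRAME_RE.match(ln)]
    if not frame_indices:
        raise ValueError("no traceback frames found")
    idx = frame_indices[-1]
    frame = FRAME_RE.match(lines[idx])
    # The statement that failed is printed on the line after its frame header.
    source = lines[idx + 1].strip() if idx + 1 < len(lines) - 1 else ""
    exc = EXC_RE.match(lines[-1].strip())
    return BuildFailure(
        exc_type=exc.group("type") if exc else "UnknownError",
        message=(exc.group("message") or "") if exc else lines[-1].strip(),
        file=frame.group("file"),
        line=int(frame.group("line")),
        function=frame.group("func"),
        source_line=source,
    )


def repair_prompt(f: BuildFailure) -> str:
    """Hypothetical prompt handed to a code model, focused on the failing line."""
    return (
        f"{f.exc_type} in {f.function}() at {f.file}:{f.line}\n"
        f"Failing statement: {f.source_line}\n"
        f"Error: {f.message}\n"
        "Propose a minimal patch and explain the root cause."
    )


log = """Traceback (most recent call last):
  File "app/main.py", line 12, in <module>
    run()
  File "app/service.py", line 40, in run
    total = price * qty
TypeError: unsupported operand type(s) for *: 'NoneType' and 'int'
"""
failure = parse_traceback(log)
print(repair_prompt(failure))
```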

The net effect is that developers spend their time on the 40% of the job that required their expertise in the first place - and they do it without the constant context-switching that boilerplate work creates.

Phase 4: Quality Assurance (-40% Effort)

The traditional approach: QA is a bottleneck at the end of the sprint. Test cases are written manually, often by engineers who are already context-switching between multiple tickets. Coverage is inconsistent, edge cases are missed, and regression testing is a manual process that gets skipped under schedule pressure.

The GTEMAS approach: We practice Test-Driven Generation. AI generates unit tests before the code is written, based on the accepted requirements and the function signatures defined in the architecture phase. For integration and end-to-end testing, AI agents crawl the UI, identify interaction flows, and generate Playwright and Cypress test scripts automatically. Visual regression testing is built in - a screenshot comparison on every build, not just on release candidates.

The result is that we achieve greater than 90% test coverage by default, not as a stretch goal. More importantly, this coverage is maintained as the codebase evolves, because the test generation process is automated and runs continuously rather than being a one-time effort tied to the initial build.

Phase 5: Documentation and Handover (Near-Zero Incremental Effort)

The traditional approach: Documentation is always outdated. Engineers write it at the end of a project when they are already mentally moving to the next one. The result is documentation that describes what the system was supposed to do, not what it actually does. Handover sessions are long and still leave knowledge gaps.

The GTEMAS approach: Documentation is a continuous byproduct of the development process, not a separate phase. AI agents monitor git commits and automatically update the README, API documentation, and changelogs as the codebase evolves. When a function signature changes, the documentation update lands alongside that change rather than weeks later. When a new endpoint is added, it appears in the Swagger docs without manual intervention.
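The detection half of this loop can be approximated without any AI by diffing a module's public API between two revisions. The sketch below uses Python's `ast` module (3.9+ for `ast.unparse`) to turn that diff into changelog lines; in practice the two source strings might come from `git show HEAD~1:path/to/module.py` and the working tree. The module contents are hypothetical, and an agent would still be needed to write the prose around each entry.

```python
import ast


def public_signatures(source: str) -> dict[str, str]:
    """Map each public top-level function name to its rendered signature."""
    sigs = {}
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and not node.name.startswith("_"):
            returns = f" -> {ast.unparse(node.returns)}" if node.returns else ""
            sigs[node.name] = f"{node.name}({ast.unparse(node.args)}){returns}"
    return sigs


def changelog_entries(old_src: str, new_src: str) -> list[str]:
    """Diff two versions of a module's public API into changelog lines."""
    old, new = public_signatures(old_src), public_signatures(new_src)
    entries = [f"Added: `{new[n]}`" for n in sorted(new.keys() - old.keys())]
    entries += [f"Removed: `{old[n]}`" for n in sorted(old.keys() - new.keys())]
    entries += [
        f"Changed: `{old[n]}` -> `{new[n]}`"
        for n in sorted(old.keys() & new.keys())
        if old[n] != new[n]
    ]
    return entries


OLD = '''
def get_user(user_id):
    ...

def delete_user(user_id):
    ...
'''

NEW = '''
def get_user(user_id, include_deleted=False):
    ...

def create_user(name):
    ...
'''

for entry in changelog_entries(OLD, NEW):
    print(entry)
```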

When we hand over a project, clients receive a living knowledge base that reflects the current state of the system - not a stale PDF from the original design phase. This materially reduces onboarding time for internal teams taking over the codebase and reduces the support burden on GTEMAS during the transition period.

The Business Impact: What This Means in Practice

The efficiency gains across phases compound. A 30% reduction in requirements effort and a 50% reduction in coding effort do not simply average out to a 40% reduction in total project duration. They interact: cleaner requirements mean fewer rework cycles in coding; automated tests mean fewer defects to diagnose in QA; continuous documentation means shorter handovers.
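To first order, ignoring the interactions described above, the overall saving is an effort-weighted sum of the per-phase reductions rather than their average. The phase shares below are hypothetical placeholders, not measured data, and documentation is conservatively given a 0% reduction because its figure is not stated as a percentage.

```python
# Hypothetical share of total delivery effort per phase; substitute your own project data.
EFFORT_SHARE = {
    "requirements": 0.15,
    "architecture": 0.10,
    "coding": 0.40,
    "qa": 0.25,
    "documentation": 0.10,
}

# Per-phase reductions from the phase headings; documentation is a conservative 0.0 placeholder.
REDUCTION = {
    "requirements": 0.30,
    "architecture": 0.25,
    "coding": 0.50,
    "qa": 0.40,
    "documentation": 0.0,
}


def first_order_reduction(share: dict[str, float], reduction: dict[str, float]) -> float:
    """Effort-weighted saving, ignoring cross-phase effects such as avoided rework."""
    if abs(sum(share.values()) - 1.0) > 1e-9:
        raise ValueError("effort shares must sum to 1.0")
    return sum(share[phase] * reduction[phase] for phase in share)


weighted = first_order_reduction(EFFORT_SHARE, REDUCTION)
stated = [REDUCTION[p] for p in ("requirements", "architecture", "coding", "qa")]
naive_average = sum(stated) / len(stated)
print(f"effort-weighted: {weighted:.1%}, naive average: {naive_average:.2%}")
```

With these assumed shares the first-order estimate is 37%, against a plain average of 36.25% for the four stated phase figures; the result moves with the share of effort each phase takes, and the rework and defect interactions described above would come on top of it.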

For clients, this translates into three concrete outcomes:

- Faster time-to-market: Projects that previously required six months regularly reach initial production in three to four months. MVPs that previously required eight weeks can reach user testing in two to three weeks. The feedback loop with real users accelerates, which improves the quality of the final product.

- Lower total cost of ownership: AI-generated code that passes automated tests and is reviewed by senior engineers who understand what they are approving has lower defect rates in our delivery experience. Less technical debt accumulates, so the system is cheaper to maintain two years after delivery than one built the traditional way.

- Higher-value engineering engagement: Clients pay for architectural thinking, business domain expertise, and the judgment calls that determine whether a system will perform under real conditions. They do not pay for typing syntax. The billing model reflects this: we scope by deliverable and outcome, not by hours worked.

The Velocity Engine in Your Organization

The Velocity Engine is not a product. It is a methodology, and like any methodology, its value comes from consistent application across the full delivery lifecycle, not from cherry-picking the parts that are easy to adopt. Organizations that implement AI tooling in the coding phase without reforming their requirements and QA processes capture a fraction of the available gains.

GTEMAS applies this framework across our client engagements, adapting it to the specific technology stack, team structure, and governance requirements of each organization. For clients in regulated industries, this includes the audit trails and review documentation that compliance frameworks require. For clients running large existing codebases, it includes the codebase indexing and RAG configuration that makes AI output relevant and accurate rather than generic.

If your organization is evaluating how to integrate AI into its development practice - beyond the "buy Copilot licenses" level - we can run a structured assessment of your current SDLC and identify where the highest-impact interventions are. Reach out to the GTEMAS team to start that conversation.